Statistics for Decision Makers - 11.05 - Type I and Type II Errors


Prerequisites

Questions

- What are Type I and Type II errors?

- How should significant and non-significant differences be interpreted?

- Why should the null hypothesis not be accepted when the effect is not significant?

Simplification

Let us assume that the null hypothesis always states that there is no difference

- Example

- The new application is as popular as the old one (there is no difference in popularity)

- The new hardware is as fast as the old one (there is no difference in speed)

- The drug doesn't cure the disease (it makes no difference to the patient's health)

Overview

| State of the world | There is no difference | There is a difference |
|---|---|---|
| Null hypothesis (no difference) | Rejected (there is a difference) | Not Rejected (there is no difference) |
| Error | Type I: False Alarm, False Positive | Type II: Missed Detection, False Negative |

Example 1

- The patient has a disease

- There is a problem

| State of the world | Patient doesn't have cancer | Patient has cancer |
|---|---|---|
| What we tell the patient | has cancer (H0 rejected) | no cancer (H0 not rejected) |
| Error | Type I: False Alarm, False Positive | Type II: Missed Detection, False Negative |

Example 2

- The new hardware is different from the old one

- Customers are more satisfied with the new hardware than the old one

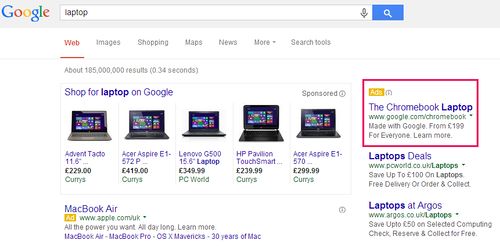

- There is an increase in sales after running an AdWords campaign

| State of the world | No difference between new and old (no difference) | There is a difference between old and new (difference) |
|---|---|---|
| Null hypothesis (no difference) | Rejected (difference) | Not Rejected (no difference) |
| Error | Type I: False Detection | Type II: Missed Detection |


Type I error

- Type I error is a rejection of a true null hypothesis

- In the business world, the terms False Positive or False Alarm can be used instead

- Rejecting the null hypothesis is not an all-or-nothing decision

- The Type I error rate is affected by the α level: the lower the α level, the lower the Type I error rate

Probability of Type I error

- It might seem that α is the probability of a Type I error

- However, this is not correct

- Instead, α is the probability of a Type I error given that the null hypothesis is true

You can only make a Type I error if the null hypothesis is true.
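The conditional reading of α can be checked with a small simulation. The sketch below is only an illustration under stated assumptions: the data are drawn from a normal distribution with a known standard deviation, the null hypothesis (mean equal to 0) is true by construction, and a two-sided z-test is used. The function names `z_test_rejects` and `false_alarm_rate` are hypothetical helpers introduced here, not part of the course material.

```python
import random
from statistics import NormalDist, mean


def z_test_rejects(sample, mu0, sigma, alpha):
    """Two-sided z-test: return True if H0 (mean == mu0) is rejected at level alpha."""
    z = (mean(sample) - mu0) / (sigma / len(sample) ** 0.5)
    z_crit = NormalDist().inv_cdf(1 - alpha / 2)
    return abs(z) > z_crit


def false_alarm_rate(alpha, n=20, trials=10_000, seed=1):
    """Share of simulated experiments that reject H0 although H0 is true."""
    rng = random.Random(seed)
    rejections = 0
    for _ in range(trials):
        # Assumption: H0 is true by construction (true mean 0, sigma 1).
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        if z_test_rejects(sample, mu0=0.0, sigma=1.0, alpha=alpha):
            rejections += 1
    return rejections / trials


for alpha in (0.10, 0.05, 0.01):
    rate = false_alarm_rate(alpha)
    print(f"alpha = {alpha:.2f}  simulated Type I error rate = {rate:.3f}")
```

Because every simulated data set satisfies the null hypothesis, each rejection is a False Alarm, and the simulated rate should land close to the chosen α.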

Type II error

- Type II error is failing to reject a false null hypothesis

- Unlike a Type I error, a Type II error is not really an error

- When a statistical test is not significant, it means that the data do not provide strong evidence that the null hypothesis is false

Lack of significance does not support the conclusion that the null hypothesis is true

- Therefore, a researcher would not make the mistake of incorrectly concluding that the null hypothesis is true when a statistical test was not significant

- Instead, the researcher would consider the test inconclusive

- Contrast this with a Type I error in which the researcher erroneously concludes that the null hypothesis is false when, in fact, it is true

A Type II error can only occur if the null hypothesis is false

- If the null hypothesis is false, then the probability of a Type II error is called β

- The probability of correctly rejecting a false null hypothesis equals 1 − β and is called power

- Power is the probability of being able to find a difference if it really exists
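As a rough illustration of β and power, the sketch below computes both for a one-sided z-test. It assumes a known standard deviation and, importantly, a specific true difference (`effect`), since β is only defined once the null hypothesis is assumed false by a given amount. The function name `power_one_sided_z` and all numeric values are hypothetical.

```python
from statistics import NormalDist


def power_one_sided_z(effect, sigma, n, alpha):
    """Power of a one-sided z-test (H1: mean > mu0) for an assumed true shift `effect`."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha)   # rejection threshold under H0
    shift = effect * n ** 0.5 / sigma        # true shift in standard-error units
    return 1 - std_normal.cdf(z_crit - shift)


# Assumed scenario: true difference of 0.5 standard deviations, 25 observations.
power = power_one_sided_z(effect=0.5, sigma=1.0, n=25, alpha=0.05)
beta = 1 - power
print(f"power = {power:.3f}, beta = {beta:.3f}")
```

When `effect` is set to 0, the function returns α, because with no real difference the only way to reject is a False Alarm.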

Errors and Decision Making

- Increasing the significance level (α) increases the chance of a False Alarm and decreases the chance of a Missed Detection

- To decrease the chances of both errors, the sample size has to be increased
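The two statements above can be made concrete with a small table. This is a sketch under assumptions, not a general result: it uses a one-sided z-test with known standard deviation and a hypothetical true difference of 0.4 standard deviations. The helper `beta_one_sided_z` is a name introduced here for illustration.

```python
from statistics import NormalDist


def beta_one_sided_z(effect, sigma, n, alpha):
    """Probability of a Missed Detection for an assumed true shift `effect`."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha)
    return std_normal.cdf(z_crit - effect * n ** 0.5 / sigma)


print(" n   alpha   beta")
for n in (20, 80):
    for alpha in (0.01, 0.05, 0.10):
        # Assumed true difference: 0.4 standard deviations.
        beta = beta_one_sided_z(effect=0.4, sigma=1.0, n=n, alpha=alpha)
        print(f"{n:3d}   {alpha:.2f}   {beta:.3f}")
```

Within one sample size, raising α lowers β. Moving to the larger sample lowers β at every α, which is why a larger sample is the way to reduce both error rates at once.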

What is more serious?

- A False Alarm is usually more serious, as the test is conclusive

- A Missed Detection is usually less harmful, for example:

- If a test failed to detect a disease in a patient, it can be repeated

- On the other hand, if treatment is given for a misdiagnosed problem, the consequences could be worse

- When selecting the alpha level, which determines the probability of a False Alarm, it is important to keep in mind which error is more harmful:

- If administering treatment, even to a healthy patient, is cheap and not harmful,

- but not detecting the disease in a sick patient would be dangerous,

- then setting the significance level (alpha) high (e.g. 5%) is the right thing to do

Supplier v Customer

- We cannot calculate the probability of a Type II error without knowing the true state of the world

- For a fixed sample size, a reduction in the probability of a Type I error will increase the chance of a Type II error

- Google v hard drive provider

- Company X (the supplier) provides hard drives to Google for its cloud

- H0 is that all hard drives comply with the specifications provided by the supplier

- In other words, the hard drives Google buys were drawn from the population of the hard drives complying with the spec

- Google tests a sample of the hard drives to see if they are compliant using Hypothesis Testing

- If a Type I error occurs, it is to the supplier's detriment, since the hard drives are fine but will be rejected by Google (after testing)

- If a Type II error occurs, Google accepts drives which are not up to the standard

- The sample size can be increased, but that will increase the cost of testing as well

- There is a trade-off between the reduction of errors and the cost of sampling

Supplier v Customer Solution

- Google and Company X (the supplier) can agree on alpha and beta levels at which the probability of both errors is the same

- This can be achieved by changing the sample size (sometimes decreasing it) and the significance level (alpha)
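One way to picture such an agreement is sketched below. It is a simplified illustration, not the procedure used by any real company: it assumes a one-sided z-test on a measured drive characteristic with known standard deviation, and it assumes both parties agree on a specific deviation from the spec (`effect`) that counts as non-compliant. Under those assumptions, α equals β when the rejection threshold sits halfway between the compliant and the non-compliant mean. The names `balanced_alpha` and `beta_one_sided_z` and all numbers are hypothetical.

```python
from statistics import NormalDist


def balanced_alpha(effect, sigma, n):
    """Alpha at which alpha == beta for a one-sided z-test against shift `effect`."""
    shift = effect * n ** 0.5 / sigma        # deviation in standard-error units
    return 1 - NormalDist().cdf(shift / 2)   # threshold halfway between H0 and H1


def beta_one_sided_z(effect, sigma, n, alpha):
    """Probability of a Missed Detection for an assumed true shift `effect`."""
    std_normal = NormalDist()
    z_crit = std_normal.inv_cdf(1 - alpha)
    return std_normal.cdf(z_crit - effect * n ** 0.5 / sigma)


print("  n   alpha   beta")
for n in (10, 40, 160):
    # Assumed non-compliance: a deviation of 0.3 standard deviations from spec.
    alpha = balanced_alpha(effect=0.3, sigma=1.0, n=n)
    beta = beta_one_sided_z(effect=0.3, sigma=1.0, n=n, alpha=alpha)
    print(f"{n:3d}   {alpha:.3f}   {beta:.3f}")
```

The supplier's risk (α) and the customer's risk (β) come out equal in every row, and both shrink as the sample grows, so the remaining negotiation is how much testing cost the two parties accept for a given shared risk.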

Quiz